Many people on Quora ask for reliable sources of actual Microsoft DP-200 exam questions, so this post compiles the latest DP-200 exam questions and a free DP-200 PDF dumps download. For a reliable and up-to-date DP-200 learning source, see https://www.pass4itsure.com/dp-200.html (DP-200 exam questions, QA Dumps: 207).

Choose the version of the Microsoft DP-200 exam questions that suits your needs

- Microsoft DP-200 exam questions video

- Microsoft Azure Data Engineer Associate DP-200 exam practice question

- DP-200 Exam Dumps (PDF) 2020

Microsoft Azure Data Engineer Associate DP-200 exam practice question

You can check the sample exam with 13 questions here.

QUESTION 1

You need to process and query ingested Tier 9 data.

Which two options should you use? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

A. Azure Notification Hub

B. Transact-SQL statements

C. Azure Cache for Redis

D. Apache Kafka statements

E. Azure Event Grid

F. Azure Stream Analytics

Correct Answer: EF

Explanation:

Event Hubs provides a Kafka endpoint that can be used by your existing Kafka-based applications as an alternative to running your own Kafka cluster.

You can stream data into Kafka-enabled Event Hubs and process it with Azure Stream Analytics, in the following steps:

1. Create a Kafka enabled Event Hubs namespace.

2. Create a Kafka client that sends messages to the event hub.

3. Create a Stream Analytics job that copies data from the event hub into an Azure blob storage.

Scenario:

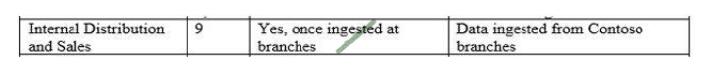


Tier 9 reporting must be moved to Event Hubs, queried, and persisted in the same Azure region as the company's main office.

References: https://docs.microsoft.com/en-us/azure/event-hubs/event-hubs-kafka-stream-analytics
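For step 2 of the explanation above, here is a minimal sketch of the settings a Kafka client typically needs to talk to the Kafka endpoint of an Event Hubs namespace. The property names follow the librdkafka style used by common Kafka clients. The namespace name and connection string are hypothetical placeholders, and the helper `event_hubs_kafka_config` is an illustration only, not part of any SDK.

```python
def event_hubs_kafka_config(namespace: str, connection_string: str) -> dict:
    """Build Kafka client settings for an Event Hubs namespace's Kafka endpoint."""
    return {
        # The Kafka endpoint of an Event Hubs namespace listens on port 9093.
        "bootstrap.servers": f"{namespace}.servicebus.windows.net:9093",
        # Event Hubs requires TLS plus SASL PLAIN authentication.
        "security.protocol": "SASL_SSL",
        "sasl.mechanism": "PLAIN",
        # The username is the literal string "$ConnectionString";
        # the password is the namespace connection string itself.
        "sasl.username": "$ConnectionString",
        "sasl.password": connection_string,
    }


# Hypothetical values, for illustration only.
config = event_hubs_kafka_config(
    "example-namespace",
    "Endpoint=sb://example-namespace.servicebus.windows.net/;SharedAccessKeyName=...;SharedAccessKey=...",
)
print(config["bootstrap.servers"])
```

A dictionary like this would then be passed to whichever Kafka producer library you use. The Stream Analytics job in step 3 reads from the event hub as a normal input.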

QUESTION 2

The real-time processing layer must meet the following requirements:

Ingestion:

1. Receive millions of events per second

2. Act as a fully managed Platform-as-a-Service (PaaS) solution

3. Integrate with Azure Functions

Stream processing:

1. Process on a per-job basis

2. Provide seamless connectivity with Azure services

3. Use a SQL-based query language

Analytical data store:

1. Act as a managed service

2. Use a document store

3. Provide data encryption at rest

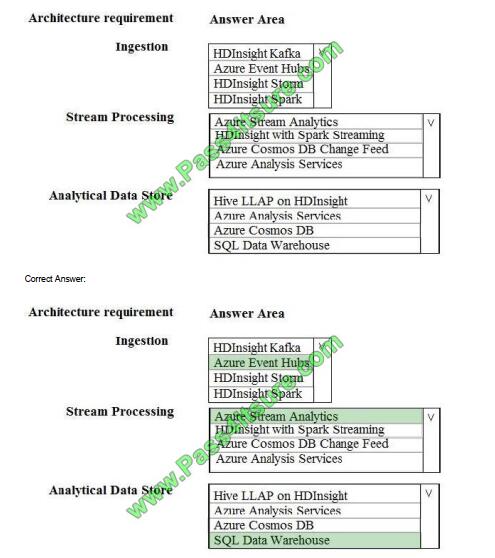

You need to identify the correct technologies to build the Lambda architecture using minimal effort. Which technologies should you use? To answer, select the appropriate options in the answer area.

NOTE: Each correct selection is worth one point.

Hot Area:

Box 1: Azure Event Hubs

This portion of a streaming architecture is often referred to as stream buffering. Options include Azure Event Hubs, Azure IoT Hub, and Kafka.

Incorrect Answers: Not HDInsight Kafka

Azure Functions need a trigger defined in order to run. There is a limited set of supported trigger types, and Kafka is not one of them.

Box 2: Azure Stream Analytics

Azure Stream Analytics provides a managed stream processing service based on perpetually running SQL queries that operate on unbounded streams.

You can also use open source Apache streaming technologies like Storm and Spark Streaming in an HDInsight cluster.

Box 3: Azure SQL Data Warehouse

Azure SQL Data Warehouse provides a managed service for large-scale, cloud-based data warehousing. HDInsight supports Interactive Hive, HBase, and Spark SQL, which can also be used to serve data for analysis.

References:

https://docs.microsoft.com/en-us/azure/architecture/data-guide/big-data/

QUESTION 3

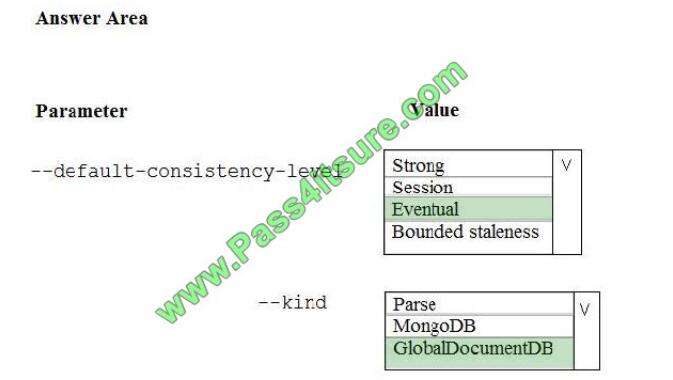

A company is planning to use Microsoft Azure Cosmos DB as the data store for an application. You have the following Azure CLI command:

az cosmosdb create –

Correct Answer:

Box 1: Eventual

With Azure Cosmos DB, developers can choose from five well-defined consistency models on the consistency spectrum. From strongest to more relaxed, the models include strong, bounded staleness, session, consistent prefix, and eventual consistency.

The following image shows the different consistency levels as a spectrum.

Box 2: GlobalDocumentDB

Select Core (SQL) to create a document database and query by using SQL syntax.

Note: The API determines the type of account to create. Azure Cosmos DB provides five APIs: Core (SQL) and MongoDB for document databases, Gremlin for graph databases, Azure Table, and Cassandra.

References:

https://docs.microsoft.com/en-us/azure/cosmos-db/consistency-levels

https://docs.microsoft.com/en-us/azure/cosmos-db/create-sql-api-dotnet
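To make the two boxes concrete, here is a small sketch that assembles an `az cosmosdb create` argument list with the `--default-consistency-level` and `--kind` options and orders the five consistency levels from strongest to most relaxed. This is not the command from the question, which is cut off above. The account name and resource group are hypothetical, and the helper functions are illustrations only.

```python
# Consistency levels as spelled by the Azure CLI, strongest first.
CONSISTENCY_LEVELS = [
    "Strong",
    "BoundedStaleness",
    "Session",
    "ConsistentPrefix",
    "Eventual",
]

# Account kinds accepted by `az cosmosdb create --kind`.
ACCOUNT_KINDS = {"GlobalDocumentDB", "MongoDB", "Parse"}


def is_stronger(a: str, b: str) -> bool:
    """Return True if consistency level `a` is stricter than level `b`."""
    return CONSISTENCY_LEVELS.index(a) < CONSISTENCY_LEVELS.index(b)


def cosmosdb_create_args(name: str, resource_group: str,
                         consistency: str, kind: str) -> list:
    """Build the argument list for an `az cosmosdb create` call."""
    if consistency not in CONSISTENCY_LEVELS:
        raise ValueError(f"unknown consistency level: {consistency}")
    if kind not in ACCOUNT_KINDS:
        raise ValueError(f"unknown account kind: {kind}")
    return [
        "az", "cosmosdb", "create",
        "--name", name,
        "--resource-group", resource_group,
        "--kind", kind,
        "--default-consistency-level", consistency,
    ]


# Hypothetical account and resource group names.
args = cosmosdb_create_args("example-account", "example-rg",
                            "Eventual", "GlobalDocumentDB")
print(" ".join(args))
```

`GlobalDocumentDB` is the kind that corresponds to the Core (SQL) API, which is why it pairs with the document database requirement.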

QUESTION 4

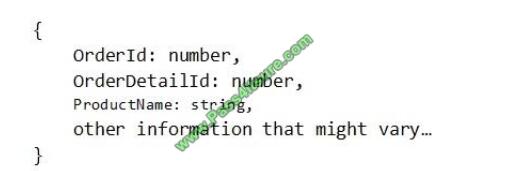

You have a container named Sales in an Azure Cosmos DB database. Sales has 120 GB of data. Each entry in Sales has the following structure.

The partition key is set to the OrderId attribute.

Users report that when they perform queries that retrieve data by ProductName, the queries take longer than expected to complete.

You need to reduce the amount of time it takes to execute the problematic queries.

Solution: You change the partition key to include ProductName.

Does this meet the goal?

A. Yes

B. No

Correct Answer: B

One option is to have a lookup collection “ProductName” for the mapping of “ProductName” to “OrderId”.

References: https://azure.microsoft.com/sv-se/blog/azure-cosmos-db-partitioning-design-patterns-part-1/
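The lookup collection idea can be sketched with plain Python dictionaries standing in for the two containers. This is an in-memory illustration only, with no Cosmos DB SDK calls. The sample items and the `Quantity` field are made up, since the real item structure is shown only in the image.

```python
from collections import defaultdict

# Stand-in for the Sales container, keyed by its partition key (OrderId).
sales = {
    "order-1": {"OrderId": "order-1", "ProductName": "Widget", "Quantity": 2},
    "order-2": {"OrderId": "order-2", "ProductName": "Gadget", "Quantity": 1},
    "order-3": {"OrderId": "order-3", "ProductName": "Widget", "Quantity": 5},
}

# Stand-in for the lookup collection: ProductName -> list of OrderId values.
product_lookup = defaultdict(list)
for order_id, item in sales.items():
    product_lookup[item["ProductName"]].append(order_id)


def orders_for_product(product_name: str) -> list:
    """Resolve a product name to its orders via the lookup, then read by key."""
    order_ids = product_lookup.get(product_name, [])
    # Each read below targets a single partition key instead of scanning all.
    return [sales[order_id] for order_id in order_ids]


widget_orders = orders_for_product("Widget")
print([order["OrderId"] for order in widget_orders])
```

The point of the pattern is that a query by ProductName becomes one lookup plus a few point reads by OrderId, rather than a fan-out across every partition.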

QUESTION 5

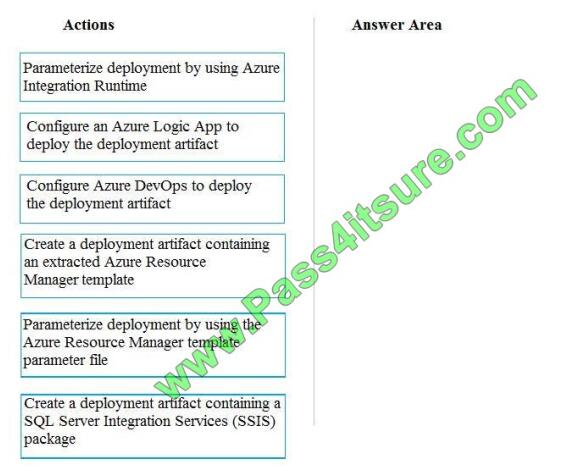

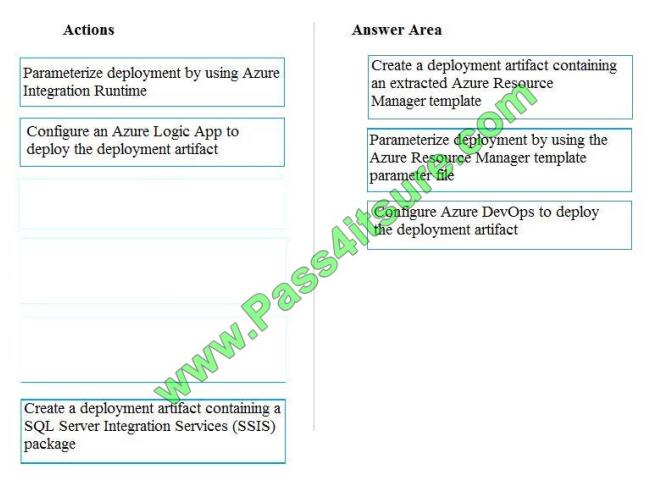

You need to ensure that phone-based polling data can be analyzed in the PollingData database.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

Select and Place:

Correct Answer:

Explanation/Reference:

All deployments must be performed by using Azure DevOps.

Deployments must use templates used in multiple environments.

No credentials or secrets should be used during deployments.

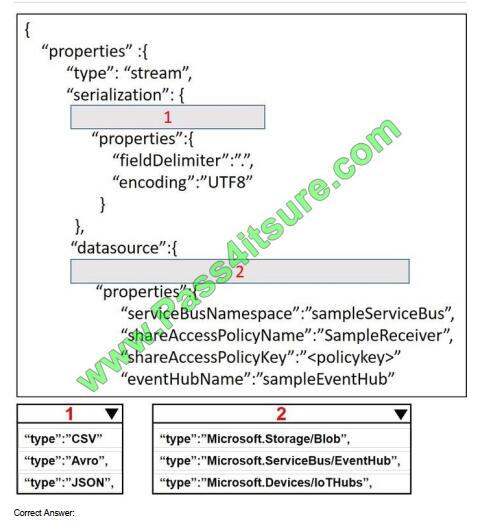

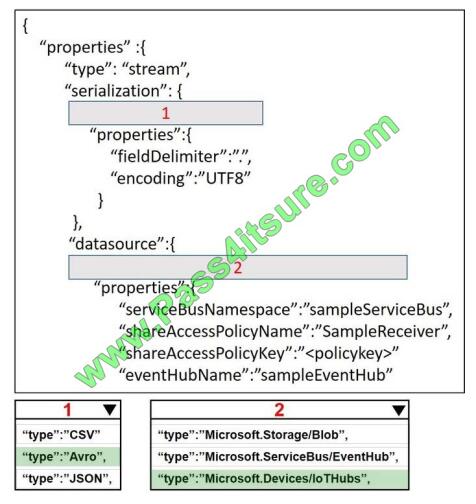

QUESTION 6

A company plans to analyze a continuous flow of data from a social media platform by using Microsoft Azure Stream Analytics. The incoming data is formatted as one record per row.

You need to create the input stream.

How should you complete the REST API segment? To answer, select the appropriate configuration in the answer area.

NOTE: Each correct selection is worth one point.

Hot Area:

Correct Answer:

QUESTION 7

You manage a solution that uses Azure HDInsight clusters.

You need to implement a solution to monitor cluster performance and status.

Which technology should you use?

A. Azure HDInsight .NET SDK

B. Azure HDInsight REST API

C. Ambari REST API

D. Azure Log Analytics

E. Ambari Web UI

Correct Answer: E

Ambari is the recommended tool for monitoring utilization across the whole cluster. The Ambari dashboard shows easily glanceable widgets that display metrics such as CPU, network, YARN memory, and HDFS disk usage. The specific metrics shown depend on cluster type. The “Hosts” tab shows metrics for individual nodes so you can ensure the load on your cluster is evenly distributed.

The Apache Ambari project is aimed at making Hadoop management simpler by developing software for provisioning, managing, and monitoring Apache Hadoop clusters. Ambari provides an intuitive, easy-to-use Hadoop management web UI backed by its RESTful APIs.

References: https://azure.microsoft.com/en-us/blog/monitoring-on-hdinsight-part-1-an-overview/

https://ambari.apache.org/

QUESTION 8

You plan to create an Azure Databricks workspace that has a tiered structure. The workspace will contain the following three workloads:

A workload for data engineers who will use Python and SQL

A workload for jobs that will run notebooks that use Python, Spark, Scala, and SQL

A workload that data scientists will use to perform ad hoc analysis in Scala and R

The enterprise architecture team at your company identifies the following standards for Databricks environments:

The data engineers must share a cluster.

The job cluster will be managed by using a request process whereby data scientists and data engineers provide packaged notebooks for deployment to the cluster.

All the data scientists must be assigned their own cluster that terminates automatically after 120 minutes of inactivity.

Currently, there are three data scientists.

You need to create the Databricks clusters for the workloads.

Solution: You create a Standard cluster for each data scientist, a High Concurrency cluster for the data engineers, and a Standard cluster for the jobs.

Does this meet the goal?

A. Yes

B. No

Correct Answer: B

We would need a High Concurrency cluster for the jobs.

Note:

Standard clusters are recommended for a single user. Standard can run workloads developed in any language: Python, R, Scala, and SQL.

A high concurrency cluster is a managed cloud resource. The key benefits of high concurrency clusters are that they provide Apache Spark-native fine-grained sharing for maximum resource utilization and minimum query latencies.

References:

https://docs.azuredatabricks.net/clusters/configure.html
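As an illustration of the "own cluster that terminates automatically after 120 minutes" standard, here is a sketch that builds one cluster specification per data scientist, using field names from the Databricks Clusters API. The user names, worker count, runtime version, and node type are placeholders you would replace with real values, and `data_scientist_cluster` is a hypothetical helper.

```python
def data_scientist_cluster(user: str) -> dict:
    """Build a cluster spec for one data scientist (one cluster per person)."""
    return {
        "cluster_name": f"ds-{user}",
        # Placeholders: fill in a real runtime version and VM size.
        "spark_version": "<runtime-version>",
        "node_type_id": "<node-type>",
        "num_workers": 2,  # assumed size, not given in the question
        # Terminate the cluster after 120 minutes of inactivity.
        "autotermination_minutes": 120,
    }


# Hypothetical names for the three data scientists.
data_scientists = ["alice", "bob", "carol"]
cluster_specs = [data_scientist_cluster(user) for user in data_scientists]

for spec in cluster_specs:
    print(spec["cluster_name"], spec["autotermination_minutes"])
```

Each dictionary could be sent as the JSON body of a cluster create request. The cluster mode (Standard or High Concurrency) is chosen separately and is what the question is really testing.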

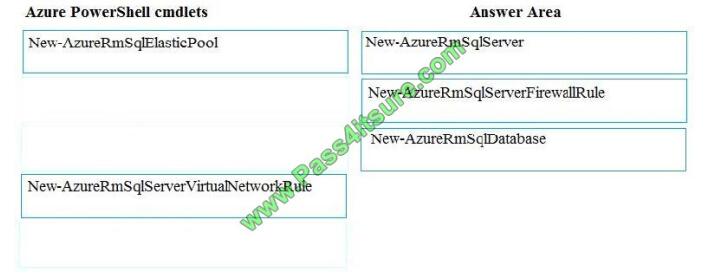

QUESTION 9

You plan to create a new single database instance of Microsoft Azure SQL Database.

The database must only allow communication from the data engineer

Correct Answer:

Step 1: New-AzureRmSqlServer

Create a server.

Step 2: New-AzureRmSqlServerFirewallRule

New-AzureRmSqlServerFirewallRule creates a firewall rule for a SQL Database server.

Can be used to create a server firewall rule that allows access from the specified IP range.

Step 3: New-AzureRmSqlDatabase

Example: Create a database on a specified server

PS C:\>New-AzureRmSqlDatabase -ResourceGroupName "ResourceGroup01" -ServerName "Server01" -DatabaseName "Database01"

References: https://docs.microsoft.com/en-us/azure/sql-database/scripts/sql-database-create-and-configure-database-powershell?toc=%2fpowershell%2fmodule%2ftoc.json
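A server firewall rule in step 2 is defined by a start and an end IP address, and a client is let through only if its address falls inside that range. The check can be sketched with Python's standard `ipaddress` module. The addresses below come from documentation-reserved ranges and are examples only, and `is_allowed` is a hypothetical helper, not an Azure API.

```python
import ipaddress


def is_allowed(client_ip: str, start_ip: str, end_ip: str) -> bool:
    """Return True if client_ip lies inside the inclusive range start_ip..end_ip."""
    client = ipaddress.ip_address(client_ip)
    start = ipaddress.ip_address(start_ip)
    end = ipaddress.ip_address(end_ip)
    return start <= client <= end


# Example rule covering a single /24-sized block (documentation addresses).
rule_start, rule_end = "203.0.113.0", "203.0.113.255"

print(is_allowed("203.0.113.10", rule_start, rule_end))
print(is_allowed("198.51.100.7", rule_start, rule_end))
```

To restrict access to a single workstation, the start and end addresses of the rule would simply be the same value.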

QUESTION 10

You develop data engineering solutions for a company.

You need to ingest and visualize real-time Twitter data by using Microsoft Azure.

Which three technologies should you use? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

A. Event Grid topic

B. Azure Stream Analytics Job that queries Twitter data from an Event Hub

C. Azure Stream Analytics Job that queries Twitter data from an Event Grid

D. Logic App that sends Twitter posts which have target keywords to Azure

E. Event Grid subscription

F. Event Hub instance

Correct Answer: BDF

You can use Azure Logic Apps to send tweets to an event hub and then use a Stream Analytics job to read from the event hub and send them to Power BI.

References: https://community.powerbi.com/t5/Integrations-with-Files-and/Twitter-streaming-analytics-step-by-step/td-p/9594

QUESTION 11

You have an Azure SQL database named DB1 that contains a table named Table1. Table1 has a field named Customer_ID that is varchar(22).

You need to implement masking for the Customer_ID field to meet the following requirements:

The first two prefix characters must be exposed.

The last four characters must be exposed.

All other characters must be masked.

Solution: You implement data masking and use a random number function mask.

Does this meet the goal?

A. Yes

B. No

Correct Answer: B

You must use Custom Text data masking, which exposes the first and last characters and adds a custom padding string in the middle.

References: https://docs.microsoft.com/en-us/azure/sql-database/sql-database-dynamic-data-masking-get-started
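To see what a custom text mask does to a value, here is a small Python emulation of the prefix, padding, suffix idea. It only imitates the visible result and does not call SQL Database. The sample Customer_ID value is invented, and the handling of values too short to mask is a simplifying assumption rather than the documented behavior.

```python
def partial_mask(value: str, prefix: int, padding: str, suffix: int) -> str:
    """Expose the first `prefix` and last `suffix` characters, pad the middle."""
    if len(value) <= prefix + suffix:
        # Assumption for this sketch: hide short values entirely.
        return padding
    tail = value[len(value) - suffix:] if suffix > 0 else ""
    return value[:prefix] + padding + tail


# Invented sample value; expose the first two and last four characters.
masked = partial_mask("AB1234567890WXYZ", 2, "XXXX", 4)
print(masked)
```

A random number mask, as proposed in the solution, replaces the whole value and cannot keep specific leading and trailing characters visible, which is why the answer is No.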

QUESTION 12

Contoso, Ltd. plans to configure existing applications to use Azure SQL Database.

When security-related operations occur, the security team must be informed.

You need to configure Azure Monitor while minimizing administrative effort.

Which three actions should you perform? Each correct answer presents part of the solution.

NOTE: Each correct selection is worth one point.

A. Create a new action group to email [email protected].

B. Use [email protected] as an alert email address.

C. Use all security operations as a condition.

D. Use all Azure SQL Database servers as a resource.

E. Query audit log entries as a condition.

Correct Answer: ACD

References: https://docs.microsoft.com/en-us/azure/azure-monitor/platform/alerts-action-rules

QUESTION 13

You have an Azure Storage account and an Azure SQL data warehouse in the UK South region.

You need to copy blob data from the storage account to the data warehouse by using Azure Data Factory.

The solution must meet the following requirements:

Ensure that the data remains in the UK South region at all times.

Minimize administrative effort.

Which type of integration runtime should you use?

A. Azure integration runtime

B. Self-hosted integration runtime

C. Azure-SSIS integration runtime

Correct Answer: A

Incorrect Answers:

B: A self-hosted integration runtime is intended for on-premises data sources.

References: https://docs.microsoft.com/en-us/azure/data-factory/concepts-integration-runtime


DP-200 Exam Dumps (PDF) 2020

[PDF] Microsoft Azure DP-200 PDF Dumps: https://drive.google.com/file/d/1B7VeG5nUq_o-TeTiFf-DX4Fp_DgoXB2a/view?usp=sharing

Why choose Pass4itsure

Pass4itsure shares discount codes year-round

The latest discount code “2020PASS” is provided below.

This is the official Microsoft page for the DP-200 study guide and updates:

https://docs.microsoft.com/en-us/learn/certifications/exams/dp-200

Pass4itsure has free dumps resources that can help you pass the DP-200 exam

2020 Latest DP-200 Exam Dumps (PDF & VCE) Free Share:

https://drive.google.com/file/d/1B7VeG5nUq_o-TeTiFf-DX4Fp_DgoXB2a/view?usp=sharing

P.S.

I am sharing my DP-200 exam preparation here. Practice as many questions as possible. I recommend downloading the DP-200 PDF dumps because they contain most of the questions asked in the real DP-200 exam, along with the answers. The DP-200 exam questions practice guide at https://www.pass4itsure.com/dp-200.html has a good list of questions for practicing.